Objective Lethality: Why the Infantry Must Embrace a Culture of Metrics

By 1stLt Andrew Danko

In the profession of arms, gut feelings are not enough.

We operate the most technologically advanced weapons and equipment in the world, measured with exacting precision. Yet, when we evaluate the combat effectiveness of our most critical asset—the infantry Marine—we often revert to ambiguous, subjective assessments.

We are leaving lethality on the table.

To forge a superior force, the Marine Corps infantry must shift from a culture of subjective opinion to one of objective evaluation. The path to achieving this transformation is scalable and achievable, but must begin at the lowest levels of leadership.

Part I: The Limits of Pass/Fail — Our Current State

The Marine Corps’ current framework for ensuring combat readiness is built upon the Training and Readiness (T&R) Manual for each occupational specialty. For the infantry, NAVMC 3500.44D dictates these standards. This manual outlines the “Core Capabilities” a unit must possess and breaks them down into thousands of individual and collective training “events.” Each event includes a corresponding Performance Evaluation Checklist (PECL) that outlines the conditions for successful completion. In theory, this creates a standardized system. In practice, it fosters a “check-the-box” culture where the evaluation is overwhelmingly binary: a unit is graded as “Trained” or “Untrained.”

This system’s fundamental flaw is its failure to provide leaders with the data needed for genuine performance management. At the individual level, a highly motivated 0331 Section Leader who can disassemble and reassemble an M240B blindfolded receives the same “Trained” checkmark as a brand-new cross-trained 0311 machine gunner who struggles through the task with a technical manual. At the unit level, a Rifle Company “completes” a live-fire T&R task simply by executing it, often with no minimum target accuracy requirement tied to the grade. Even if a forward-thinking commander collects this data, the information exists in a vacuum.

The result is that this pass/fail model robs us of four crucial capabilities that are the very purpose of collecting metrics:

Establishing a Baseline: The checkmark tells us a Marine passed, but it doesn’t tell us how well. Without collecting data on the initial attempt—like the time to disassemble a weapon or a unit’s initial hit percentage on a range—we have no objective baseline from which to measure proficiency. We cannot distinguish between “barely competent” and “mastery.”

Tracking Improvement: Without a baseline, we have no mechanism to tangibly prove an increase in proficiency over time. Improvement remains anecdotal. We cannot definitively state that a Marine’s reload time has decreased by 15%, or that a platoon’s accuracy has improved by 25% over a training block.

Validating Training: If we cannot track improvement with hard data, we can never objectively validate the effectiveness of a training plan. A commander who develops a new shooting package feels it is better, but he cannot prove it with statistics, making it difficult to justify its adoption by other units.

Establishing Meaningful Standards: Without collecting and storing data, we cannot compile averages to create data-driven standards for individuals (by rank and billet) or units (by squad, platoon, etc.). The ability to compare Company A to Company B, or to see how one’s fire team leaders stack up against the battalion average, is lost. This limitation prevents the identification and proliferation of best practices across the force.

This culture of subjective evaluation extends to our highest level of pre-deployment certification. The Expeditionary Operations Training Group (EOTG), while invaluable, relies on an evaluation methodology that still lacks the quantifiable data needed to prove, for example, that one MEU’s raid force is 15% faster, with 10% fewer simulated casualties sustained, at securing an objective than the MEU that deployed before it. We are left with a system that tells us if we are “trained,” but not how well, how much we have improved, or how we truly stack up against our peers.

Part II: The Micro-Level Solution — Proving the Concept

The answer to the limitations of the T&R system is not to discard it, but to augment it with a culture of data that begins at the platoon level. As a platoon commander for 2nd Platoon, Kilo Company, 3rd Battalion, 6th Marines, I put this theory to the test. We moved beyond asking “did we pass?” and started asking “how well did we do, and how can we prove we’re getting better?”

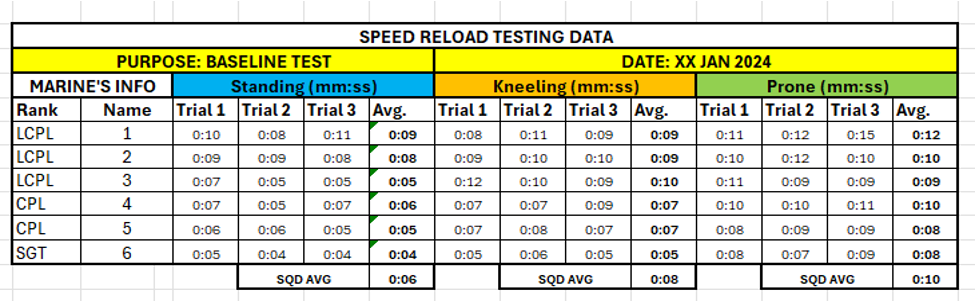

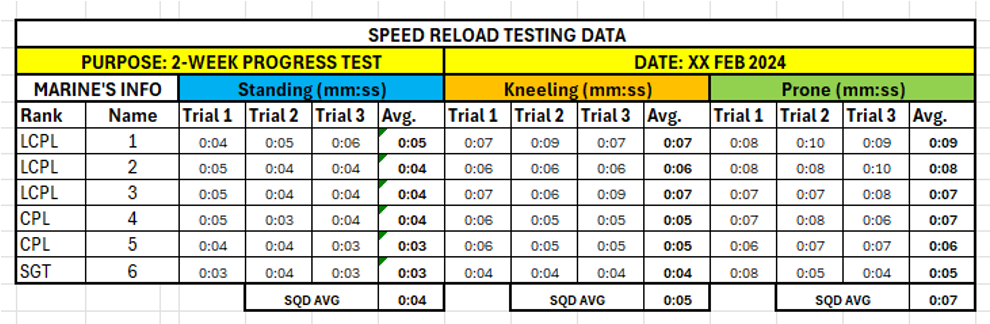

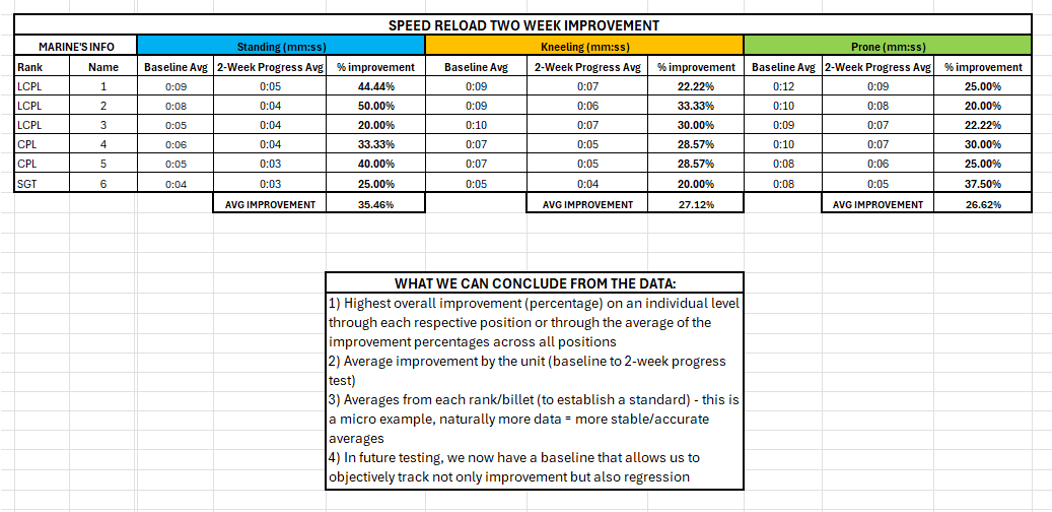

To establish our baseline, our experiment was simple in its design. We timed every Marine as they conducted multiple repetitions of both speed and tactical reloads from the standing, kneeling, and prone positions. This allowed us to calculate a reliable average time for each reload type in each position for every individual. This initial data set—far more nuanced than a simple pass/fail—became our objective starting point, from which we could then calculate averages for the entire platoon, by rank, and by billet.
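The baseline calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the platoon's actual tooling: the record layout, the Marine names, and every time value are hypothetical placeholders.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical sample data: (marine, rank, reload_type, position, seconds).
# Names and times are illustrative only, not the platoon's actual results.
TRIALS = [
    ("Marine A", "LCpl", "speed", "standing", 3.0),
    ("Marine A", "LCpl", "speed", "standing", 4.0),
    ("Marine B", "Cpl", "speed", "standing", 2.0),
    ("Marine B", "Cpl", "speed", "standing", 3.0),
    ("Marine A", "LCpl", "tactical", "prone", 6.0),
    ("Marine B", "Cpl", "tactical", "prone", 5.0),
]


def average_by(trials, key_fn):
    """Group trial times by key_fn(trial) and return the mean of each group."""
    groups = defaultdict(list)
    for trial in trials:
        groups[key_fn(trial)].append(trial[4])
    return {key: mean(times) for key, times in groups.items()}


# Per-Marine baseline for each reload type and position.
individual = average_by(TRIALS, lambda t: (t[0], t[2], t[3]))
# Platoon-wide baseline for each reload type and position.
platoon = average_by(TRIALS, lambda t: (t[2], t[3]))
# Baseline by rank for each reload type and position.
by_rank = average_by(TRIALS, lambda t: (t[1], t[2], t[3]))
```

Here the platoon figure is the mean of all recorded repetitions. If Marines complete different numbers of repetitions, averaging each Marine's own mean instead would keep one individual from dominating the platoon number; which is preferable is a judgment call for the unit.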

With this baseline, we built a two-week training plan focused on deliberate repetition. Crucially, this data-driven approach empowered our Marines. During our “Fighting Load Optimization” classes, any Marine who could prove his preferred kit setup allowed him to meet or exceed the platoon’s reload averages was authorized to use it. This fostered buy-in, innovation, and a sense of ownership that a simple T&R checklist could never inspire.

The results were undeniable. At the end of two weeks, we didn’t just feel like we had improved; we had quantified our exact percentage of improvement. We validated our training plan not with anecdotal evidence, but with cold, hard numbers. This micro-level experiment proved a vital concept: many “unquantifiable” infantry skills can, in fact, be measured, and doing so drives real results.
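One plausible way to compute the percentage of improvement for a timed skill is sketched below. The function name and the sample times are assumptions for illustration; the article does not state the formula or the figures the platoon actually used.

```python
def percent_improvement(baseline_s, final_s):
    """Percent reduction in time relative to the baseline (positive = faster)."""
    if baseline_s <= 0:
        raise ValueError("baseline must be positive")
    return (baseline_s - final_s) / baseline_s * 100.0


# Hypothetical example: an average reload drops from 4.0 s to 3.0 s.
example = percent_improvement(4.0, 3.0)  # 25.0
```

Because lower times are better, the result is expressed as a reduction from the baseline. A negative result would indicate the Marine or unit got slower.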

Part III: The Macro-Level Vision — From Platoon Averages to Corps-Wide Standards

The data-driven approach, proven at the platoon level, achieves its true power when scaled across the force. We can start by better utilizing the data we already collect. While units rarely compare PFT/CFT averages today, the capability is inherent in the system. The data exists to identify the “fittest” company or battalion, allowing leaders to analyze what those high-performing units are doing in their physical training programs and apply those lessons elsewhere. Similarly, we already quantify individual marksmanship through the Annual Rifle Qualification (ARQ). But how many leadership teams are statistically comparing their company’s or battalion’s overall ARQ scores against their peers to benchmark performance and seek out the most effective training programs—proven by the statistics themselves?

These existing metrics, however valuable, have a fundamental limitation: they measure individual performance, not collective combat effectiveness. A unit’s lethality is not merely the sum of its members’ ARQ scores. This reveals the next critical step: we must begin to establish and track the statistics that aren’t currently measured. The skills that truly determine the outcome of a firefight—how a unit performs collectively under pressure—are the ones we currently fail to quantify.

This is the crucial distinction between individual marksmanship and unit-level fire and maneuver. An ARQ score captures none of this: not a fire team’s ability to gain and maintain fire superiority, a squad’s capacity to shift fires, or a platoon’s skill in distributing its weapons to suppress and neutralize an enemy. To measure this, we need new metrics. Modern automated targetry, already in use at various Marine Corps facilities, provides the tools to capture them:

Hit Percentage: The simple ratio of rounds on target to rounds fired across the entire unit.

Accuracy / Lethality: The percentage of hits within a vital, incapacitating zone, indicating how well the unit focuses its fires.

Time to Suppression: The time from target exposure to the first rounds impacting on or near the target array, measuring the unit’s speed in gaining fire superiority.
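The three metrics above reduce to simple arithmetic once a range records a few values per engagement. The sketch below assumes a hypothetical record with rounds fired, hits, vital-zone hits, and two timestamps. It does not reflect the data format of any particular automated targetry system, and the sample numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class Engagement:
    """Hypothetical per-engagement record for one unit on one target array."""
    rounds_fired: int
    hits: int
    vital_hits: int             # hits inside the vital, incapacitating zone
    exposure_time_s: float      # when the target array was exposed
    first_impact_time_s: float  # first round on or near the target array


def hit_percentage(e):
    """Rounds on target as a percentage of rounds fired."""
    return 100.0 * e.hits / e.rounds_fired if e.rounds_fired else 0.0


def vital_zone_percentage(e):
    """Percentage of hits that landed inside the vital zone."""
    return 100.0 * e.vital_hits / e.hits if e.hits else 0.0


def time_to_suppression(e):
    """Seconds from target exposure to the first impact on or near it."""
    return e.first_impact_time_s - e.exposure_time_s


# Invented numbers for illustration only.
sample = Engagement(rounds_fired=200, hits=80, vital_hits=20,
                    exposure_time_s=10.0, first_impact_time_s=14.5)
```

With these sample values, the unit hit with 40% of its rounds, placed 25% of those hits in the vital zone, and needed 4.5 seconds to get its first rounds on target.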

With this level of detail, we can move beyond the “pass/fail” T&R model and establish objective, data-backed standards for collective combat effectiveness. This approach directly supports the vision of Force Design 2030, which calls for a more technically advanced and data-driven force.

Imagine a Division-wide “Lethality Analytics Program.” By standardizing range scenarios, we could finally answer crucial questions:

What is a truly “good” performance? We could establish the actual average hit percentage, accuracy/lethality standards, and time-to-suppression for a rifle platoon at Range G-29, based on data from dozens of units.

Whose TTPs are most effective? If one battalion’s platoons average a 75% critical-zone hit rate while another averages 60%, leaders can direct the high-performing unit to share its marksmanship training plan with the entire regiment.

This is the future: moving beyond data existing in a micro-ecosystem and building an infantry that learns, adapts, and improves, based on objective proof.

Part IV: The Creative Imperative — Measuring the “Unmeasurable”

The transition to a data-driven culture is not a technological problem; it is a leadership challenge that demands creativity. For this to work, leaders from the team leader to the battalion commander must actively seek opportunities to quantify skills that have long been considered purely subjective. The ethos must be: “If it’s critical to combat, there must be a way to measure our proficiency in it.”

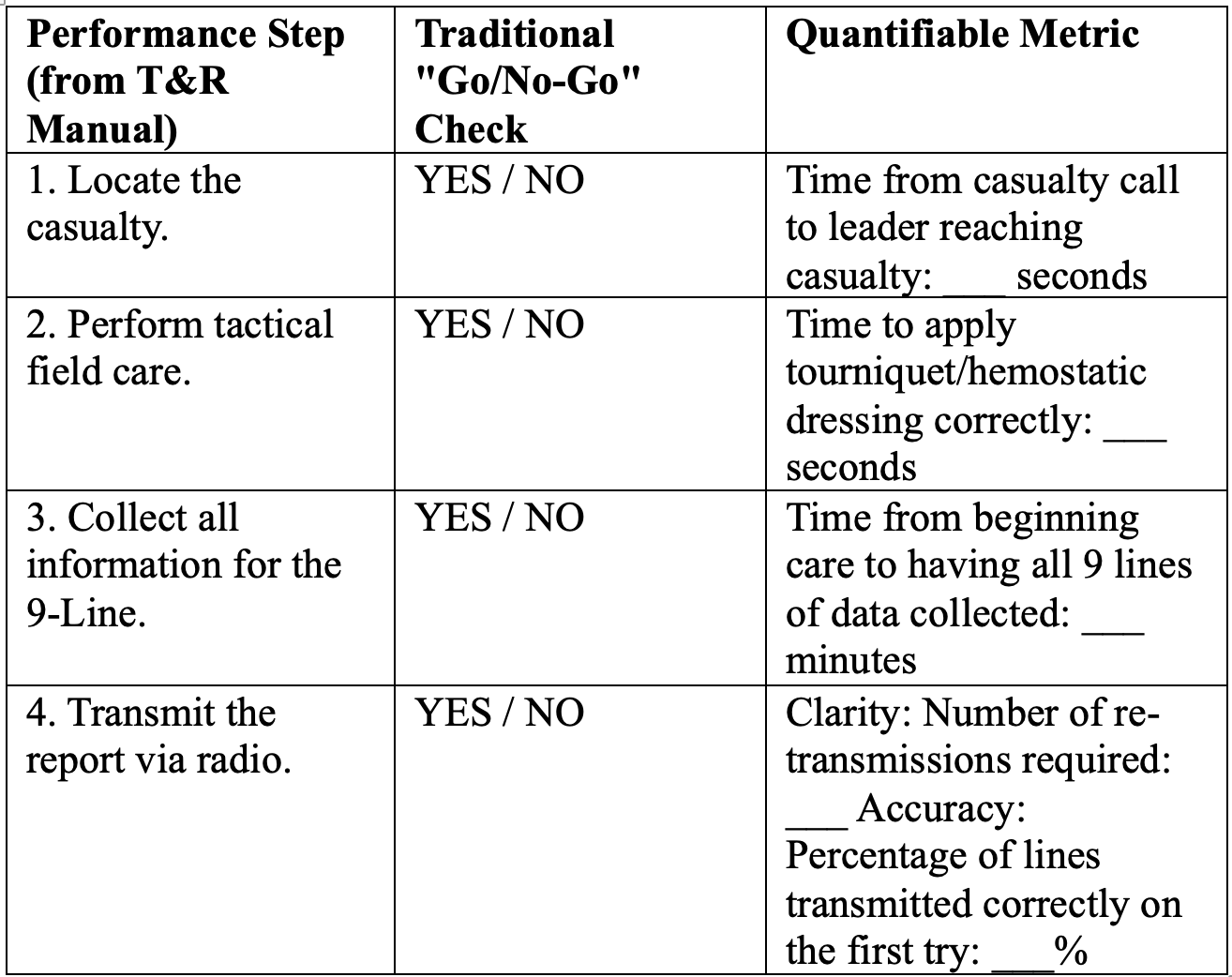

This doesn’t mean we have to reinvent the wheel. It begins by looking at the tools we already have, like the Performance Evaluation Checklists (PECLs) found in every USMC Training & Readiness (T&R) Manual, and asking not “Did the task get done?” but “How well did it get done?”

Consider a fundamental task: Requesting a Casualty Evacuation (CASEVAC). By creatively applying metrics to the existing PECL, we transform a simple checklist into a powerful data-gathering tool.

With this data, the subjective observation, “Sgt X sent a good CASEVAC 9-line,” becomes an objective assessment: “From the point of wounding, Sgt X transmitted a 100% accurate 9-line in 75 seconds, 30 seconds faster than the platoon average and with zero requests for re-transmission.”
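A short sketch shows how the Sgt X assessment could be produced from raw observations. The function and field names are hypothetical. The 105-second platoon average is only implied by the example (75 seconds being 30 seconds faster than average), not stated in the article.

```python
def assess_casevac(seconds, lines_correct, retransmissions,
                   platoon_avg_s, total_lines=9):
    """Turn raw observations of one 9-line request into comparable numbers."""
    if total_lines <= 0:
        raise ValueError("total_lines must be positive")
    return {
        "seconds": seconds,
        "accuracy_pct": 100.0 * lines_correct / total_lines,
        "seconds_faster_than_avg": platoon_avg_s - seconds,
        "retransmissions": retransmissions,
    }


# Values from the Sgt X example; 105 s is the implied platoon average.
sgt_x = assess_casevac(seconds=75.0, lines_correct=9,
                       retransmissions=0, platoon_avg_s=105.0)
```

Storing each result in this form, rather than as a single checkmark, is what allows a later comparison against the platoon average or against the same Marine's earlier attempts.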

This same mindset of applying metrics extends from individual tasks to our most complex, dynamic unit actions, where “good” is too often left to interpretation.

Squad Fire and Maneuver: Instead of just noting, “The squad was aggressive,” we can capture data that proves it by measuring metrics like Suppression Gap Time (the time between the support element’s last round and the maneuver element’s first), Rate of Advance (meters per minute), and Element “Set” Time (how quickly a bounding element is ready to support the next bound).

Obstacle Breaching (Wire): A “sloppy” breach can be fatal, but “sloppy” is not a useful metric. We can measure the components of the action: Breach Security Time (how long until the supporting unit achieves the required suppression), Lane Creation Time, and Personnel Throughput (the rate of Marines passing through the lane), which identify bottlenecks that a simple “go/no-go” would miss.

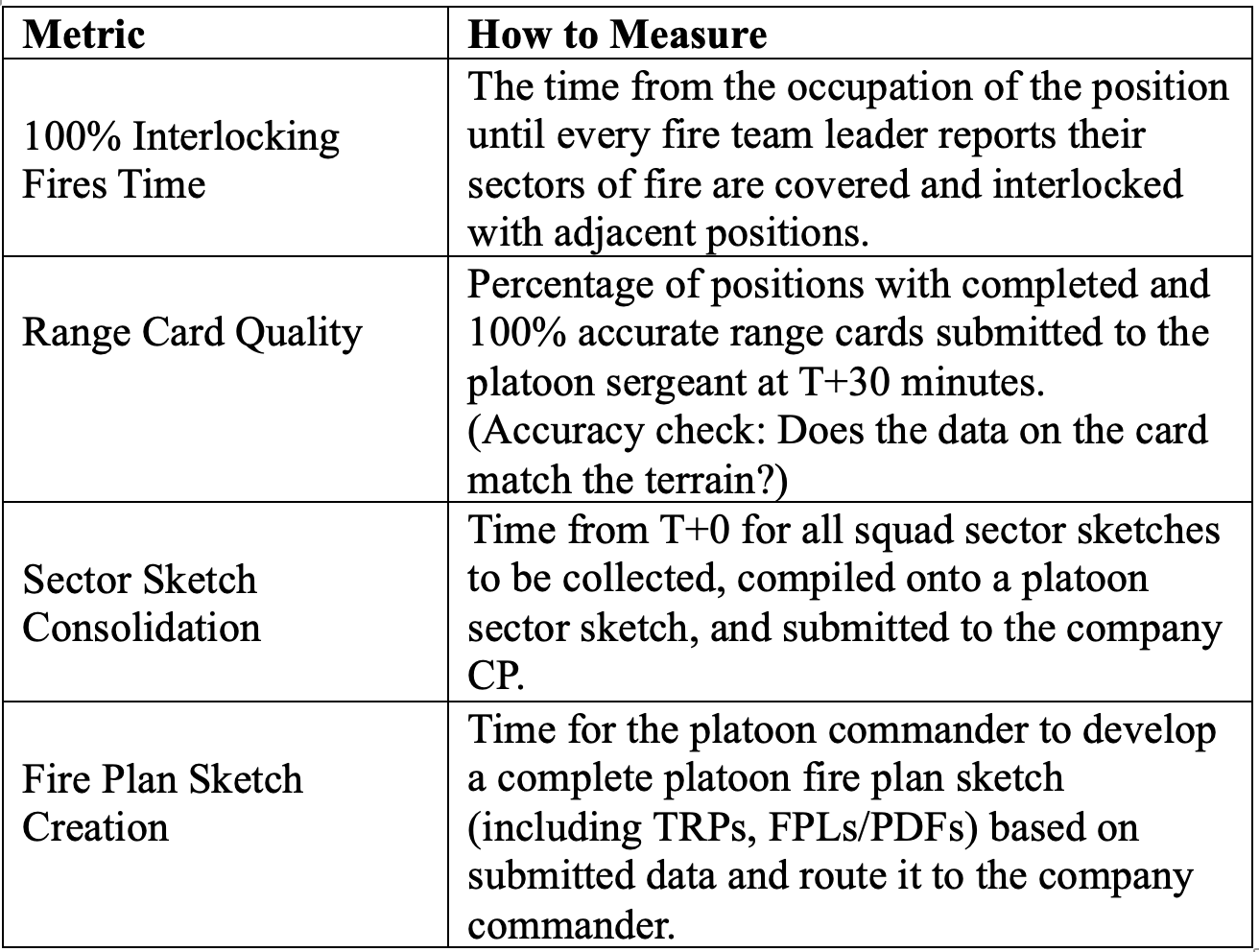

Establishing a Defense: Occupying a defense is more than just digging a hole. It’s a race against the enemy’s own decision-making cycle, where every second and every piece of information counts. We can measure the entire process, from individual actions to the platoon’s ability to report up the chain.
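Several of the unit-level metrics named above, such as Suppression Gap Time, Rate of Advance, and Personnel Throughput, can be computed from a handful of stopwatch readings. The functions below are a minimal sketch under that assumption. The names, units, and sample values are illustrative, not an established standard.

```python
def suppression_gap_s(support_last_round_s, maneuver_first_round_s):
    """Seconds between the support element's last round and the
    maneuver element's first round."""
    return maneuver_first_round_s - support_last_round_s


def rate_of_advance_m_per_min(distance_m, elapsed_s):
    """Meters covered per minute over the measured movement."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return distance_m / (elapsed_s / 60.0)


def throughput_per_min(marines_through, elapsed_s):
    """Marines passing through the breach lane per minute."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return marines_through / (elapsed_s / 60.0)


# Invented readings for illustration only.
gap = suppression_gap_s(42.0, 45.5)             # 3.5 s without suppression
advance = rate_of_advance_m_per_min(150.0, 180.0)  # 50.0 m/min
flow = throughput_per_min(13, 30.0)             # 26.0 Marines/min
```

All timestamps are assumed to come from a common clock started at the beginning of the event, so the differences between them are meaningful.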

It must be acknowledged that certain infantry skills, like tactical judgment or leadership presence, may always contain a subjective element. The goal, then, is not to foolishly chase perfect quantification in all things, but to systematically reduce subjectivity wherever possible. Where a simple metric like time or accuracy is not feasible, we must create standardized, tangible scoring criteria—a rubric—that can be applied consistently across the force. This ensures that even a qualitative ‘score’ is based on a common understanding of performance. By standardizing these evaluation criteria and, most importantly, storing the resulting data, we can move beyond the realm of conflicting opinions and begin to track trends in performance, even for our most complex skills.

Without this creative and deliberate effort to assign tangible metrics to our core competencies, our understanding of how a unit is truly performing will remain subjective. The infantry community will remain stuck in a loop of conflicting opinions, unable to definitively prove our progress.

This is not a call to turn infantry leaders into data scientists but a challenge to be more deliberate and objective in how we prepare for war. The leader who can quantify his unit’s performance is the leader who can direct its improvement.

Conclusion

We have examined the inherent limitations of our current pass/fail culture and seen how a data-driven approach, proven at the platoon level, can be scaled across the force. This is not an argument for creating more bureaucracy or turning infantry leaders into data scientists. It is an argument for embracing a culture of objective self-assessment, where “we got better” is no longer an opinion, but a quantifiable fact.

The future of infantry lethality will not be determined by the unit that has the most T&R tasks checked “green,” but by the one that can objectively prove its superiority long before the first shot is fired. The tools are available, the methodology is sound, and the need is clear.

The time to start counting is now.

1stLt Andrew K. Danko serves as executive officer for Kilo Company, Battalion Landing Team 3d Battalion, 6th Marines.

Here is my reply to Lt Danko’s article “Objective Lethality”

Good morning Sir,

I hope and pray you are well and enjoying a relaxing weekend. I enjoyed your recent article in the Connecting File, titled “Objective Lethality.”

My name is Cannon Cargile, and I am a retired Marine Gunner. I spent 30 years in the Marine Corps. I retired in 2013 as a CWO5 Marine Gunner and was the 2nd Marine Division Gunner. I was selected to be a Gunner in 1998. My first tour as a Gunner was in V22 (1999-2003); I then served as V36’s Gunner (2003-2005) and as 6th Marines Gunner (2005-2008). My last tour as a Gunner was as the 2nd Marine Div/II MEF FWD Gunner. I served 5 tours of duty in Iraq and Afghanistan. Since retiring in 2013, I have worked as a training specialist for the Marine Corps. I work on the GCE T&R manuals, helping to keep them updated and practical and ensuring our doctrine coincides with the T&R.

Please do not think I am trying to impress you with my bio. Not by a long shot. I am simply trying to emphasize the fact that I love the infantry and have spent the past 43 years of my life as an infantry Marine!

When I read your article, my initial thought was “good,” we have forward thinkers! Then I thought “bae!” In my humble opinion, the fault does not reside in the step-by-step checklist PECL of the T&R manual! It resides in the leaders who fail to be creative. They fail to see the performance steps in the T&R manual as the bare minimum! You as the leader can and should take the base model of a T&R event and turn it into a practical evaluation designed to enhance the combat effectiveness and efficiency of individuals, teams, squads, platoons, companies, and battalions.

The things you described to improve the so-called ineffective T&R are exactly what I think is expected of you as a leader of Marines! The T&R, along with the unit METL, is a basic guide to what must be accomplished and what is reportable for combat readiness!

In football, a coach designs a playbook! In his playbook he has written out all the plays, with details of each individual’s actions and how those cumulative actions achieve the desired results of the team! However, as soon as the ball is snapped, things change and creative adaptability occurs!

Our leaders as forward thinkers do the best they can to plan training with creative adaptability in mind! They take a basic T&R event and turn it into a practical evaluation designed to train and maintain combat success! We are only limited by our own imaginations.

The actions you described are awesome and are exactly what I would expect from a platoon and company commander. Keep up the great work, my friend.

Just my thoughts my brother!

Semper Fidelis and God bless

Cannon Cargile

One thing to consider is how these metrics feed into DRRS without flattening them to a Yes/No binary.